Let’s be honest — AI has completely changed how we create content. I can generate a full article in minutes, test multiple versions, and speed up my workflow in ways that felt impossible just a few years ago.

But the more I work with AI tools, the more one thing becomes clear: just because we can generate content fast doesn’t mean we should do it without thinking.

If you’re using AI for marketing in 2026 (and chances are, you are), here are the ethical questions you really need to keep in mind — in plain, practical language.

Originality: Is AI Content Really “Yours”?

This is usually the first question people ask me.

AI doesn’t think or create in the human sense. It predicts text based on patterns it learned from massive datasets. That means it can sometimes generate content that feels… familiar.

From my experience, AI is great at:

- structuring ideas

- drafting content quickly

- helping you get past the blank page

But originality still comes from you.

What I do:

I treat AI output as a first draft. I rewrite key parts, add my own opinions, and shape the message so it actually sounds like me — not like every other AI-generated article online.

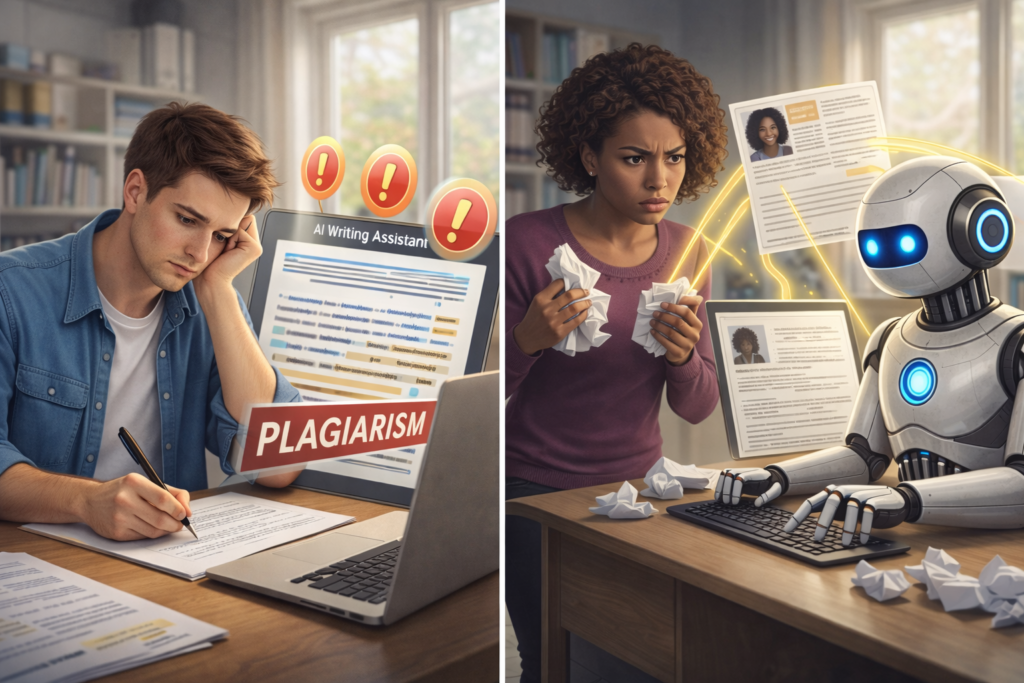

Plagiarism: A Risk You Shouldn’t Ignore

Even if you didn’t intend to copy anyone, publishing something that’s too close to existing content can hurt your brand — and your rankings.

AI tools don’t know your intent, and they don’t check their output against what’s already published. They just generate text.

If you’re serious about your content:

- always run AI text through a plagiarism checker

- read it carefully (yes, actually read it)

- rewrite anything that feels generic or suspicious

Trust me — fixing this before publishing is much easier than dealing with problems later.
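
If you want a rough first pass before reaching for a real plagiarism checker, here is a minimal sketch of the idea in Python. It is not how any particular plagiarism tool works. It simply measures how many five-word phrases from your draft also appear, word for word, in a reference text you already have on hand. The function names (`shingles`, `overlap_ratio`) and the phrase length of five words are my own assumptions for illustration.

```python
from __future__ import annotations

import re


def shingles(text: str, n: int = 5) -> set[tuple[str, ...]]:
    """Return the set of n-word sequences (shingles) in the text, lowercased."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    # If the text has fewer than n words, the range is empty and so is the set.
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def overlap_ratio(draft: str, reference: str, n: int = 5) -> float:
    """Share of the draft's n-word shingles that also appear in the reference.

    Hypothetical helper for illustration: 0.0 means no shared phrases,
    1.0 means every n-word phrase in the draft appears in the reference.
    """
    draft_shingles = shingles(draft, n)
    if not draft_shingles:
        return 0.0
    shared = draft_shingles & shingles(reference, n)
    return len(shared) / len(draft_shingles)


if __name__ == "__main__":
    draft = "the quick brown fox jumps over a sleepy cat"
    reference = "the quick brown fox jumps over the lazy dog"
    print(f"Shared phrases: {overlap_ratio(draft, reference):.0%}")
```

A high ratio only tells you the wording overlaps with that one reference. It can't search the web for you and it won't catch paraphrased ideas, so it complements a proper checker and a careful read rather than replacing them.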

Copyright: Who Owns AI-Generated Content?

This part still confuses a lot of marketers — and honestly, the rules are still evolving.

Here’s what I’ve learned:

- In some countries, including the United States, content generated entirely by AI with no human authorship may not be protected by copyright

- Some tools have specific terms about content ownership

- If you feed copyrighted material into AI, you may be responsible for any infringement in what comes out

My rule of thumb:

The more human input and editing I add, the safer I feel legally and ethically.

Always check the terms of the AI tool you’re using — especially if you’re publishing content commercially.

Authenticity: Why “Good Enough” Isn’t Enough Anymore

One thing I hear a lot is:

“This content is fine. It works.”

And yes — it might work.

But in 2026, fine isn’t what builds trust.

Readers can tell when content feels:

- rushed

- generic

- disconnected from real experience

If you want people to trust you, you need to sound human. That means:

- sharing your perspective

- admitting uncertainty sometimes

- explaining why something matters, not just what it is

AI can help — but authenticity is still your job.

Transparency: Should You Tell People You Use AI?

Short answer? Yes — when it matters.

I don’t think every blog post needs a big AI disclaimer. But if AI played a major role, being open about it can actually build trust, not destroy it.

A simple line like:

“This article was created with the help of AI tools and edited by me.”

is often enough.

People don’t hate AI. They hate being misled.

AI Summaries vs Original Work: Where It Gets Tricky

This is a sensitive topic — especially for creators.

AI summaries can be useful, but if your content is just:

- summarizing other people’s work

- rewriting what already exists

- adding no new insight

…then you’re not really creating value.

What I try to do instead:

- use summaries as a base

- add my own examples and opinions

- link to original sources when relevant

That way, AI supports my work — it doesn’t replace someone else’s.

Using AI Responsibly (Without Overthinking It)

You don’t need to be perfect to be ethical.

You just need to be intentional.

Here’s my simple checklist:

- I edit everything before publishing

- I add my voice and experience

- I check originality

- I’m honest with my audience

- I use AI to assist my work, not to hide behind it

That balance is what makes AI a partner, not a shortcut.

Final Thoughts

AI isn’t the problem. How we use it is.

If you treat AI as a tool that helps you think, write, and work faster — while keeping your values, voice, and responsibility — you’re already ahead of most marketers.

The future of content isn’t human or AI.

It’s human with AI — done thoughtfully.

FAQ: AI Content Ethics in 2026

❓ Is AI-generated content ethical to use in marketing?

Yes — as long as AI is used as a support tool, not a replacement for human thinking. Ethical use means editing the content, adding your own insights, and being responsible for what you publish.

❓ Can AI-generated content be considered original?

AI content can be unique, but originality comes from human input. Without editing, opinion, or experience added by you, AI text is often generic and lacks true originality.

❓ Is AI content plagiarism?

Not by default, but it can be risky. AI may unintentionally generate text similar to existing content. That’s why I always recommend checking AI-generated content with plagiarism tools and rewriting any sections that come back too close to existing sources.

❓ Who owns AI-generated content?

In most cases, you can use the content commercially — but ownership rules depend on:

- local copyright laws

- the AI tool’s terms of service

- how much human input you added

Always check the tool’s policy before publishing.

❓ Should I tell readers that I use AI?

You don’t have to disclose it everywhere, but transparency builds trust. If AI played a major role, a short note explaining that the content was AI-assisted and human-edited is a good practice.

❓ Can AI summaries replace original creators’ work?

They shouldn’t. Ethical AI use means adding value, not just summarizing or rephrasing someone else’s content. Whenever possible, link to original sources and contribute your own perspective.

❓ Will Google penalize AI-generated content?

No — Google doesn’t penalize AI content just for being AI. What matters is quality, usefulness, and originality. Low-effort, mass-generated content is the real problem.
